Interactive WebAssembly Version

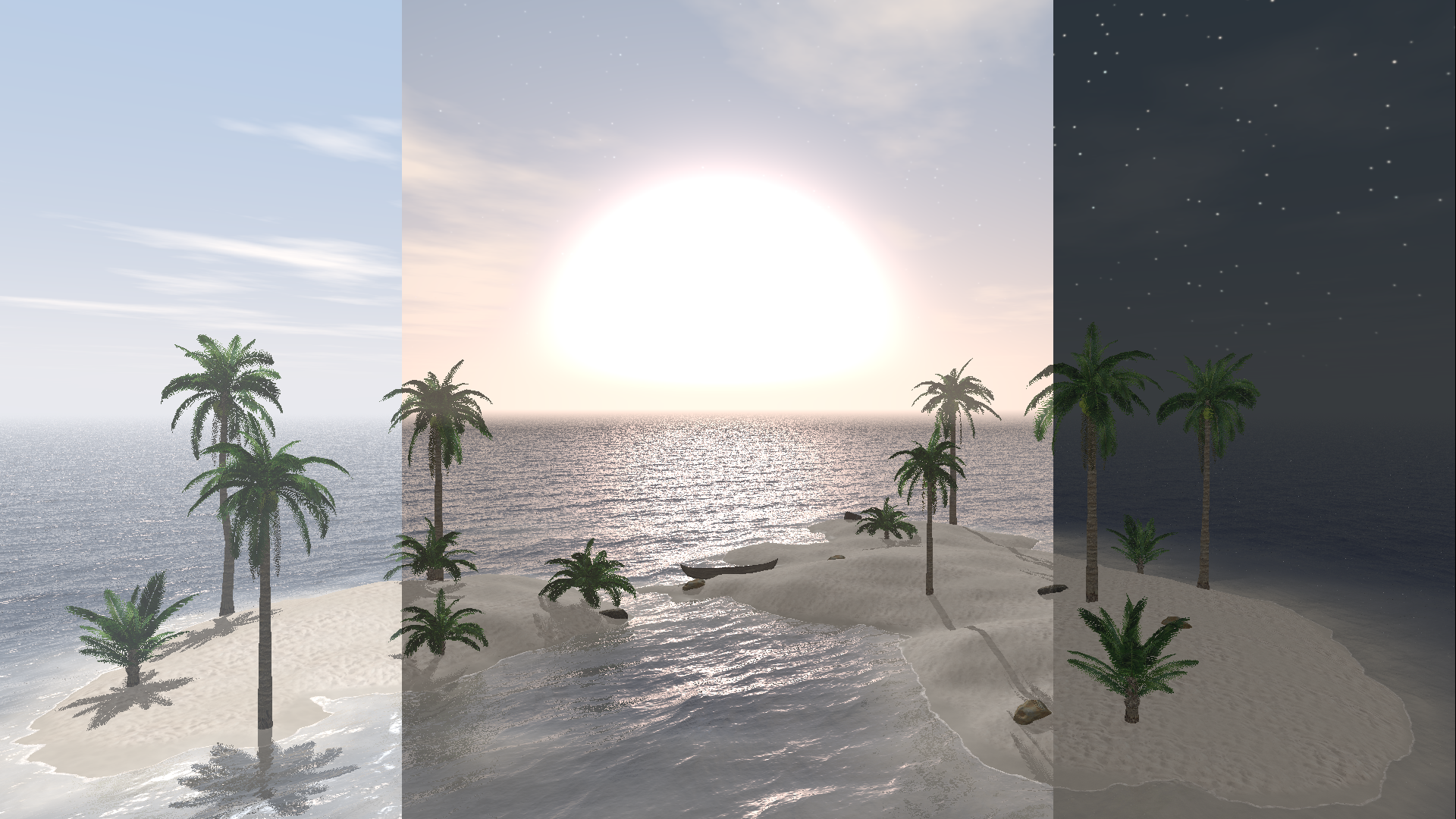

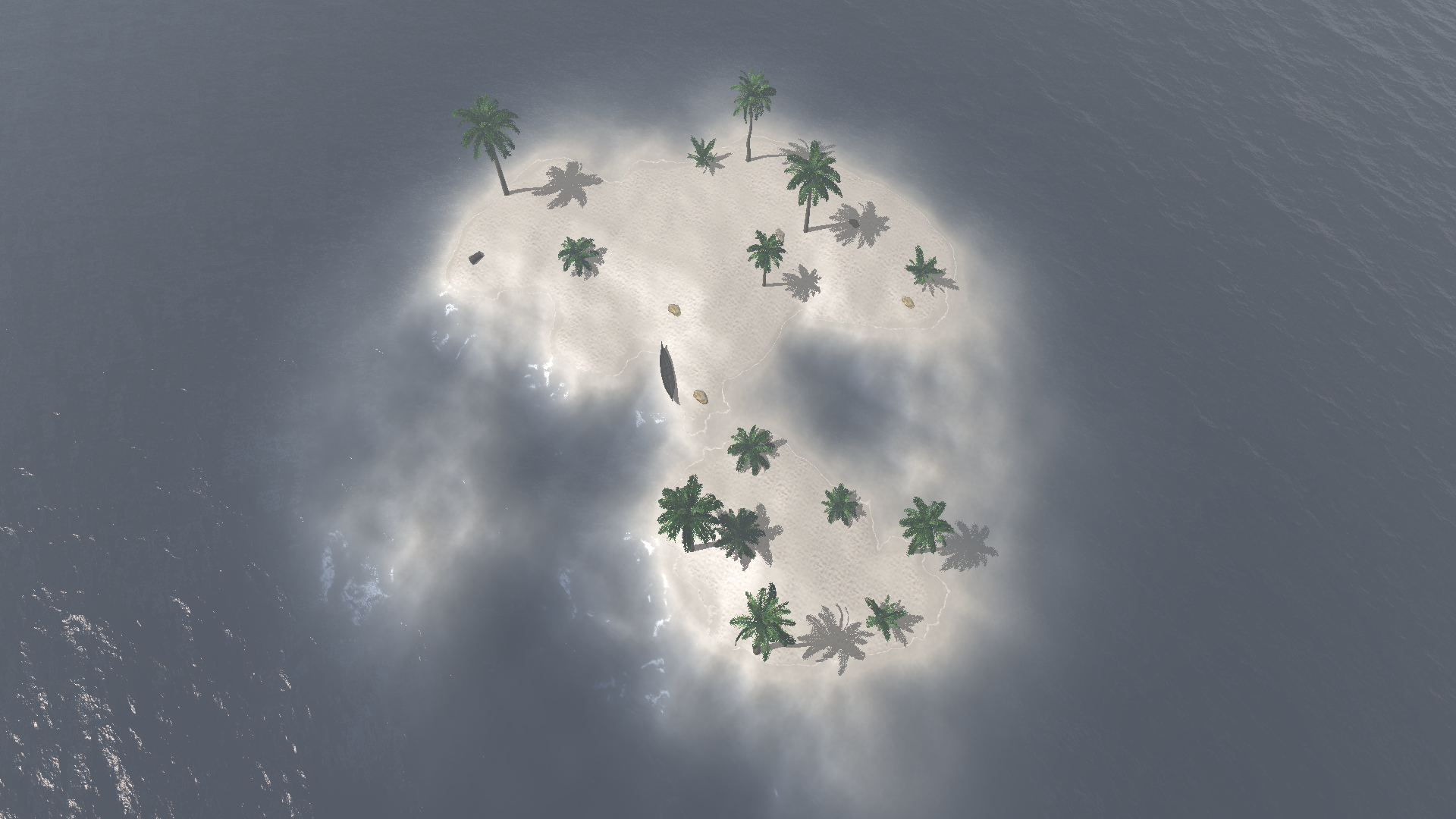

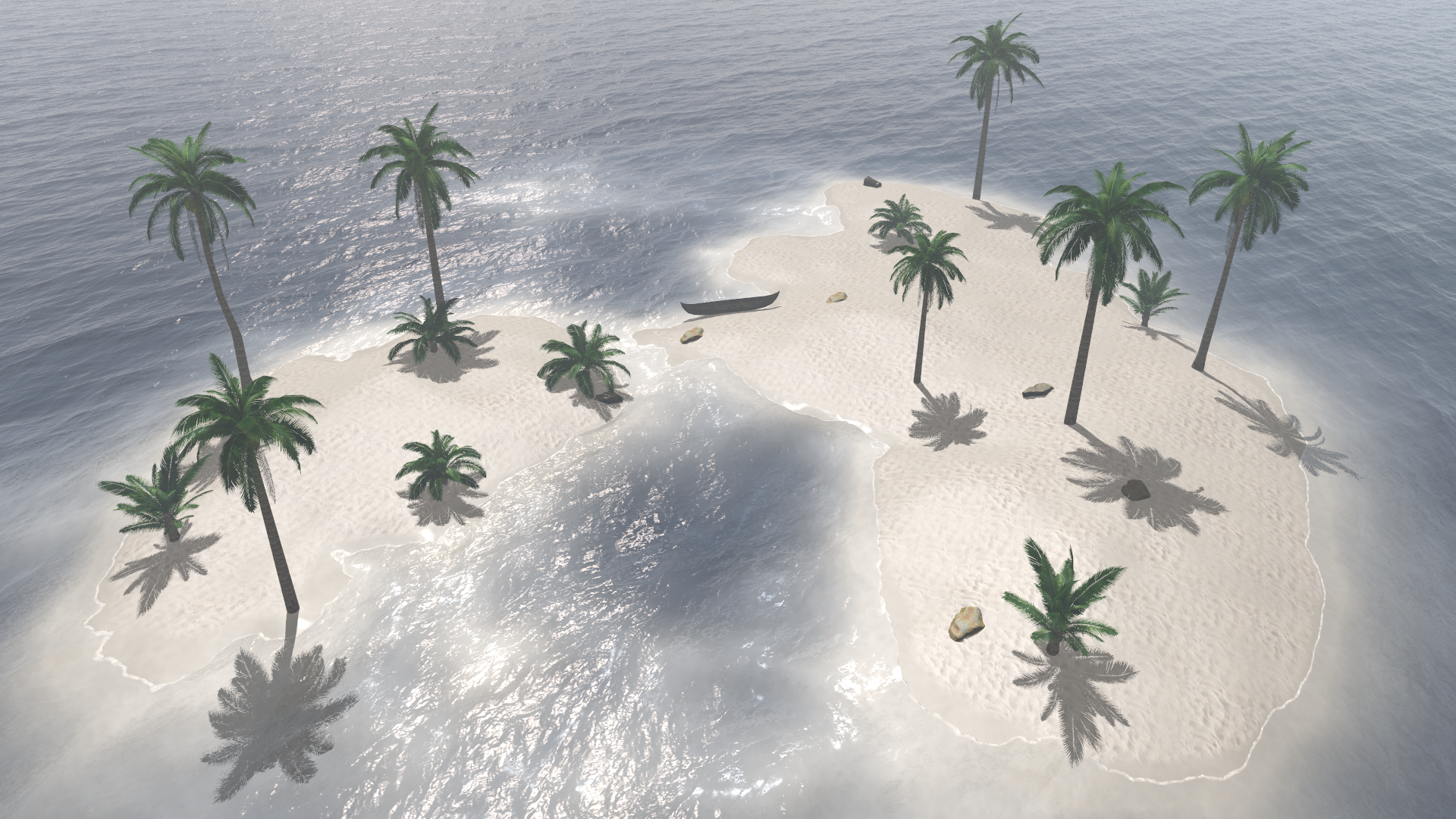

They say a picture is worth a thousand words. Taking this idea a step further, we thought that running our ray tracer at interactive frame rates would be worth more than a thousand images. With the help of Emscripten, we ported the engine to WebAssembly. To make use of multiple cores, our wrapper creates multiple web workers that receive jobs from the main program. When you stand still, a high-quality (720p) rendering is triggered.
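The job distribution described above could look roughly like the following TypeScript sketch. It is not our actual wrapper code: the tile-based split, the `TileJob` and `RenderWorker` types, and the `makeTileJobs` and `dispatch` functions are hypothetical names chosen for illustration. In a browser, `render` would wrap `Worker.postMessage` and call `done` from the worker's `onmessage` handler.

```typescript
// Hypothetical job description: one rectangular tile of the frame.
interface TileJob {
  x: number;
  y: number;
  width: number;
  height: number;
}

// Split a frame into tiles; tiles at the right and bottom edges are clipped.
function makeTileJobs(frameWidth: number, frameHeight: number, tileSize: number): TileJob[] {
  const jobs: TileJob[] = [];
  for (let y = 0; y < frameHeight; y += tileSize) {
    for (let x = 0; x < frameWidth; x += tileSize) {
      jobs.push({
        x: x,
        y: y,
        width: Math.min(tileSize, frameWidth - x),
        height: Math.min(tileSize, frameHeight - y),
      });
    }
  }
  return jobs;
}

// Stand-in for a web worker: accepts a job and calls `done` when finished.
interface RenderWorker {
  render(job: TileJob, done: () => void): void;
}

// Give every idle worker the next pending job until the queue is drained,
// then report the finished frame exactly once.
function dispatch(jobs: TileJob[], workers: RenderWorker[], onFrameDone: () => void): void {
  const pending = jobs.slice();
  let inFlight = 0;
  let finished = false;

  const feed = (worker: RenderWorker): void => {
    const job = pending.shift();
    if (job === undefined) {
      if (inFlight === 0 && !finished) {
        finished = true;
        onFrameDone();
      }
      return;
    }
    inFlight++;
    worker.render(job, () => {
      inFlight--;
      feed(worker); // the worker is idle again, so hand it more work
    });
  };

  workers.forEach(feed);
}
```

Pulling jobs from a shared queue, rather than assigning a fixed set of tiles to each worker up front, keeps all cores busy even when some tiles are much more expensive to trace than others.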

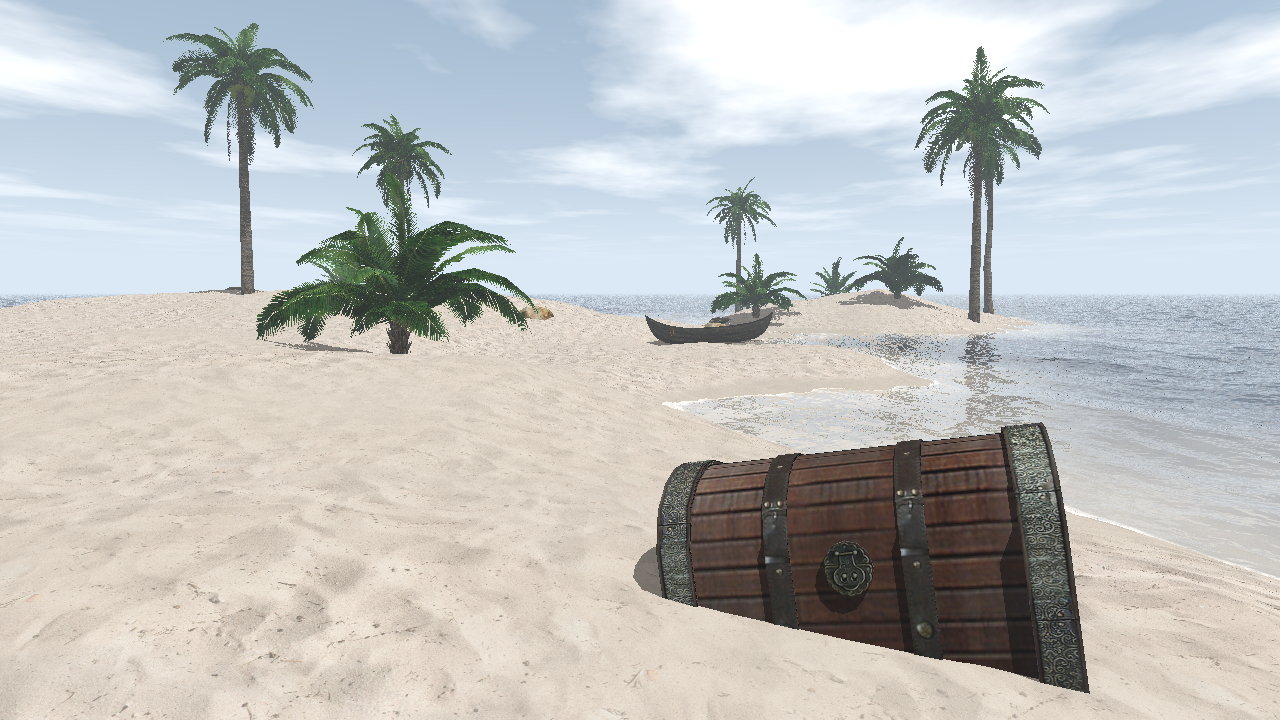

There is a small Easter egg hidden somewhere on the map. Can you find it?

Warning: Running this may crash your browser if your machine is not powerful enough.

| Action | Move | Height | Time | Randomize |
| --- | --- | --- | --- | --- |
| Keys | WASD | EQ | RF | ? |

Note that this version consumes considerably more memory than the native one, since each web worker has to load the scene and textures independently. We could have used SharedArrayBuffer to reduce memory usage (and probably improve performance slightly); however, it is currently disabled by default in most browsers for security reasons.
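To illustrate the trade-off, here is a minimal TypeScript sketch of how a wrapper might prefer shared memory when it is available and fall back to per-worker copies otherwise. This is not what our version does; `allocateSceneBuffer` and `estimateSceneMemory` are hypothetical helpers, and the memory estimate ignores everything except scene and texture data.

```typescript
// Look up SharedArrayBuffer dynamically so the sketch also runs where it is disabled.
const SharedBufferCtor: (new (byteLength: number) => ArrayBufferLike) | undefined =
  (globalThis as any).SharedArrayBuffer;

// Allocate scene storage, preferring shared memory when the runtime exposes it.
// A shared buffer can be posted to every worker without being copied.
function allocateSceneBuffer(byteLength: number): { buffer: ArrayBufferLike; shared: boolean } {
  if (SharedBufferCtor !== undefined) {
    return { buffer: new SharedBufferCtor(byteLength), shared: true };
  }
  return { buffer: new ArrayBuffer(byteLength), shared: false };
}

// Rough estimate: without sharing, every worker holds its own copy of the scene.
function estimateSceneMemory(sceneBytes: number, workerCount: number, shared: boolean): number {
  return shared ? sceneBytes : sceneBytes * workerCount;
}
```

Under this estimate, a scene that needs 100 MB costs about 400 MB with four workers and independent copies, but stays at about 100 MB when the buffer is shared.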