Raytraced

Physically Correct Memes.

Concept

The scene

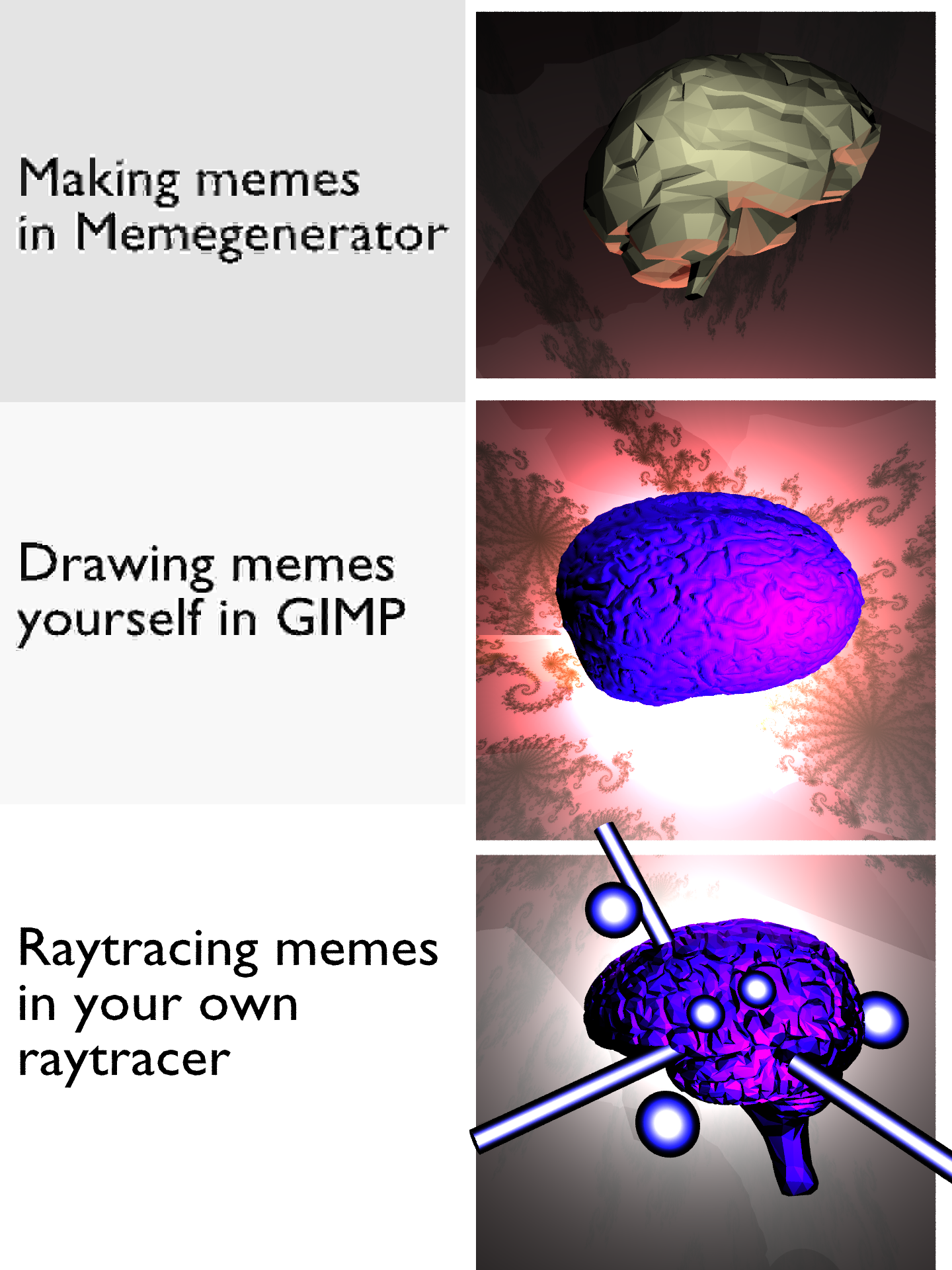

Our scene consists of two columns and three rows. In the left column, the text for each of the three stages of the galaxy brain meme is displayed using 3D models made in Blender. In the right column, we rendered three different brain models, with lighting and additional elements that become increasingly elaborate from stage to stage. The brain model itself also becomes more detailed with each level of the meme.

Why raytrace a meme

Neither of us knew how to use Blender or other 3D modelling software, so we wanted to make something simple that everyone can relate to. That is why we decided to raytrace an entire meme, including the text you see in the image, which we created as 3D models in Blender. Memes have been very popular over the last decade as image macros on the internet, but the concept is much older than one might think.

The term was coined by evolutionary biologist Richard Dawkins in his 1976 book "The Selfish Gene" as a cultural analogue of the gene: a kind of thought that is self-replicating and tries to maximize its survival. Therefore, memes are created in a way that makes them easily understandable by everyone.

Features

JPEG Artifacts

Our image uses JPEG compression artifacts on the left side, where the text is placed. This adds to the authenticity of the image, since memes are often downloaded, edited and shared again, and each round adds more JPEG artifacts to the image. To achieve this, we apply discrete cosine transforms to certain regions of the image, such that each stage of the meme shows fewer artifacts than the one before. The discrete cosine transform works similarly to the discrete Fourier transform, but it uses only cosine basis functions. JPEG uses this technique to save space while encoding images, but we directly convert back into the color domain, just to introduce the compression artifacts.
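The round trip described above can be sketched for a single 8 x 8 block of one color channel. This is a minimal illustration and not our renderer's code: the function names, the block size, and the uniform quantization step `step` are assumptions made for the example (real JPEG uses per-frequency quantization tables). The artifacts come from rounding the coefficients before transforming back, so a larger `step` gives a more degraded block.

```python
import math

def dct_1d(v):
    """Orthonormal DCT-II of a sequence."""
    n = len(v)
    out = []
    for k in range(n):
        s = sum(v[i] * math.cos(math.pi * (i + 0.5) * k / n) for i in range(n))
        scale = math.sqrt(1.0 / n) if k == 0 else math.sqrt(2.0 / n)
        out.append(scale * s)
    return out

def idct_1d(c):
    """Inverse of dct_1d (orthonormal DCT-III)."""
    n = len(c)
    out = []
    for i in range(n):
        s = c[0] * math.sqrt(1.0 / n)
        s += sum(c[k] * math.sqrt(2.0 / n) * math.cos(math.pi * (i + 0.5) * k / n)
                 for k in range(1, n))
        out.append(s)
    return out

def transform_2d(block, f):
    """Apply a 1D transform to every row, then to every column."""
    rows = [f(r) for r in block]
    cols = [f(list(c)) for c in zip(*rows)]
    return [list(r) for r in zip(*cols)]

def add_jpeg_artifacts(block, step):
    """Round-trip a square block through a uniformly quantized DCT.

    `step` is a hypothetical quality knob: coefficients are rounded to
    multiples of `step`, which discards detail and creates the artifacts.
    """
    coeffs = transform_2d(block, dct_1d)
    quantized = [[round(c / step) * step for c in row] for row in coeffs]
    return transform_2d(quantized, idct_1d)
```

Applying `add_jpeg_artifacts` with a large `step` to the top row of the meme and progressively smaller values to the rows below would reproduce the decreasing artifact level, under the assumptions stated above.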

Glowing Material

To render the light beams and spheres emitted by the brain, we implemented a 'Glow' material. Upon ray intersection, it simply traces another ray in the same direction from the intersection point; the same happens if that new ray hits the primitive from the inside. This yields the color behind the intersection point, making the solid transparent. By adding emission that depends on a color and on the angle between the incoming ray and the surface normal, the solid appears decreasingly transparent towards the center.
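A minimal sketch of this shading rule follows. The names `shade_glow`, `trace`, and `eps` are hypothetical, and weighting the emission by the absolute cosine between ray direction and normal is one plausible reading of the angle dependence, not necessarily the exact falloff we used. The `trace` callback stands in for the renderer's recursive ray query.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def shade_glow(hit_point, ray_dir, normal, glow_color, trace, eps=1e-4):
    """Return the color seen through a glowing, transparent surface.

    ray_dir and normal are assumed to be unit vectors. `trace(origin, direction)`
    is an assumed callback returning the RGB color seen along a ray.
    """
    # Continue the ray in the same direction, starting slightly past the
    # hit point so it does not re-intersect the same surface (assumed offset).
    origin = tuple(p + eps * d for p, d in zip(hit_point, ray_dir))
    behind = trace(origin, ray_dir)

    # Head-on hits (near the center of a sphere) get full emission,
    # grazing hits (near the silhouette) get almost none. abs() makes the
    # rule work for hits from the inside as well.
    weight = abs(dot(ray_dir, normal))
    return tuple(b + weight * g for b, g in zip(behind, glow_color))
```

Because the emission is added on both the entry and the exit hit, a ray through the middle of a glowing sphere picks up the glow color twice, while a ray near the edge leaves the background almost unchanged.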

Binning SAH BVH

Since we included various models with many thousands of triangles each, we decided to implement a bounding volume hierarchy using the surface area heuristic with binning. This brought our total rendering time down to under 10 minutes.
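The core of a binned SAH build is the search for a split plane within one node. The sketch below shows that step for a single axis; it is an illustration under assumptions, not our implementation. Boxes are represented as `(min_xyz, max_xyz)` tuples, the bin count of 16 and the function names are made up for the example, and the cost omits the constant traversal and intersection factors.

```python
def union(a, b):
    """Smallest box containing both boxes; None stands for the empty box."""
    if a is None:
        return b
    if b is None:
        return a
    return (tuple(map(min, a[0], b[0])), tuple(map(max, a[1], b[1])))

def surface_area(box):
    dx, dy, dz = (hi - lo for lo, hi in zip(box[0], box[1]))
    return 2.0 * (dx * dy + dy * dz + dz * dx)

def best_binned_split(boxes, axis, num_bins=16):
    """Return (cost, split_position) of the cheapest bin boundary, or None."""
    centroids = [0.5 * (lo[axis] + hi[axis]) for lo, hi in boxes]
    c_min, c_max = min(centroids), max(centroids)
    if c_min == c_max:
        return None  # all centroids coincide, no useful split on this axis

    # Sort primitives into bins by centroid and track each bin's bounds.
    counts = [0] * num_bins
    bounds = [None] * num_bins
    scale = num_bins / (c_max - c_min)
    for box, c in zip(boxes, centroids):
        b = min(num_bins - 1, int((c - c_min) * scale))
        counts[b] += 1
        bounds[b] = union(bounds[b], box)

    # Right-to-left sweep: area * count of everything from bin i onwards.
    right_cost = [0.0] * num_bins
    box, n = None, 0
    for i in range(num_bins - 1, 0, -1):
        box, n = union(box, bounds[i]), n + counts[i]
        right_cost[i] = surface_area(box) * n

    # Left-to-right sweep: evaluate the SAH cost at every bin boundary.
    best = None
    box, n = None, 0
    for i in range(num_bins - 1):
        box, n = union(box, bounds[i]), n + counts[i]
        cost = surface_area(box) * n + right_cost[i + 1]
        if best is None or cost < best[0]:
            best = (cost, c_min + (i + 1) / scale)
    return best
```

A full builder would call this for all three axes, keep the cheapest result, partition the primitives at the returned position, and recurse until a node is small enough to become a leaf. Binning keeps each node's split search linear in the number of primitives instead of requiring a sort.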

Images

Low resolution (360 x 480)

High resolution (1440 x 1920)

Funny artifacts

While experimenting with different values for the discrete cosine transform this was one of the results:

Galaxy Brain

Galaxy Brain Meme on KnowYourMeme.com

Facts

Faces: 106,979

Rendering time: 547 seconds (about 9.1 minutes)

Authors

Adrian Dapprich

Tim Maurer

Resources

Images